What is Kubernetes (K8s)? A Kubernetes Basics Tutorial

In this post, we’re going to explain Kubernetes, also known as K8s. This is the introduction to our Kubernetes Guide, which offers 20+ articles and tutorials. In this introduction, we’ll cover what Kubernetes is, the objects it is built from, and how to run a first application on a local cluster.

This article assumes you are new to Kubernetes and want to get a solid understanding of its concepts and building blocks.

To begin to understand the usefulness of Kubernetes, we have to first understand two concepts: immutable infrastructure and containers.

Armed with those concepts, we can now define Kubernetes as a container or microservice platform that orchestrates computing, networking, and storage infrastructure for your workloads. Because it doesn’t limit the types of apps you can deploy (any language works), Kubernetes extends how we scale containerized applications so that we can enjoy all the benefits of a truly immutable infrastructure. The general rule of thumb for K8s: if your app fits in a container, Kubernetes can deploy it.

By the way, if you’re wondering where the name “Kubernetes” came from, it is a Greek word, meaning helmsman or pilot. The abbreviation K8s is derived by replacing the eight letters of “ubernete” with the digit 8.

Google open-sourced the Kubernetes project in 2014, building on more than a decade of experience running production workloads at scale on its internal cluster manager, Borg. Kubernetes provides the ability to run dynamically scaling, containerised applications and to manage them through an API. It is a vendor-agnostic container management tool that helps minimise cloud computing costs whilst simplifying the running of resilient and scalable applications.

Kubernetes has become the standard for running containerised applications in the cloud, with the main cloud providers (AWS, Azure, Google Cloud, IBM and Oracle) now offering managed Kubernetes services.

To begin understanding how to use K8s, we must understand the objects in the API. K8s provides basic objects and several higher-level abstractions known as controllers. These are the building blocks of your application lifecycle.

Basic objects include:

Controllers, or higher-level abstractions, include:

Microservice: A specific part of a previously monolithic application. A traditional microservice-based architecture has multiple services making up one or more end products. Microservices are typically shared between applications and make Continuous Integration and Continuous Delivery easier to manage.

Container image: Typically a Docker container image, an executable image containing everything you need to run your application: application code, libraries, a runtime, environment variables and configuration files. At runtime, a container image becomes a container, which runs everything that is packaged into that image.

Pod: A single container or a group of containers that share storage and network, together with a Kubernetes configuration that tells those containers how to behave. Containers in the same pod share an IP address and port space and can communicate with each other over localhost. Each pod is assigned an IP address on which it can be accessed by other pods within the cluster. Applications within a pod have access to shared volumes, which is helpful when data must survive container restarts or, with persistent volumes, outlive the pod itself.
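As a rough illustration of the pod description above, the following sketch writes a manifest for a pod with two containers that share a volume. The pod name, the nginx and busybox images, and the file name are assumptions chosen for this example, not part of the tutorial itself.

```shell
# Write a hypothetical two-container pod manifest to pod-demo.yaml.
cat > pod-demo.yaml <<'EOF'
apiVersion: v1
kind: Pod
metadata:
  name: shared-volume-demo
  labels:
    app: shared-volume-demo
spec:
  volumes:
    - name: shared-data        # scratch volume visible to both containers
      emptyDir: {}
  containers:
    - name: web
      image: nginx:1.25
      ports:
        - containerPort: 80
      volumeMounts:
        - name: shared-data
          mountPath: /usr/share/nginx/html
    - name: writer
      image: busybox:1.36
      command: ["sh", "-c", "while true; do date > /data/index.html; sleep 5; done"]
      volumeMounts:
        - name: shared-data
          mountPath: /data
EOF

# With a running cluster, the manifest could be applied with:
#   kubectl apply -f pod-demo.yaml
```

Both containers mount the same emptyDir volume, so the page written by the writer container is the one served by the web container.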

Namespace: A way to create multiple virtual Kubernetes clusters within a single cluster. Namespaces are normally used for large-scale deployments where there are many users, teams and projects.
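A namespace is itself a small API object. The sketch below writes a manifest for one; the name team-a and the file name are hypothetical.

```shell
# Write a hypothetical namespace manifest to team-a-namespace.yaml.
cat > team-a-namespace.yaml <<'EOF'
apiVersion: v1
kind: Namespace
metadata:
  name: team-a
EOF

# With a running cluster, you could create it and then scope commands to it:
#   kubectl apply -f team-a-namespace.yaml
#   kubectl get pods --namespace team-a
```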

ReplicaSet: A Kubernetes ReplicaSet ensures that the specified number of pod replicas is running at all times. If one pod dies or crashes, the ReplicaSet will ensure a new one is created in its place. You would normally use a Deployment to manage this rather than creating a ReplicaSet directly.
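The idea of keeping a fixed number of pods alive can be pictured as a loop that compares a desired count with the count actually running. The function below is only a toy model of that idea, with plain numbers standing in for pods; it is not how Kubernetes is implemented, and the name reconcile is our own.

```shell
# Toy model only: integers stand in for real pods.
reconcile() {
  desired=$1
  running=$2
  # Too few pods: start replacements until the desired count is reached.
  while [ "$running" -lt "$desired" ]; do
    running=$((running + 1))
    echo "pod missing: started replacement ($running/$desired running)"
  done
  # Too many pods: remove the surplus.
  while [ "$running" -gt "$desired" ]; do
    running=$((running - 1))
    echo "surplus pod: removed one ($running/$desired running)"
  done
  echo "steady state: $running/$desired running"
}

# Three replicas wanted, but two pods have crashed and only one is left.
reconcile 3 1
```

Calling reconcile 3 1 reports two replacements and then a steady state of 3/3.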

Deployment: A way to define the desired state of pods or a ReplicaSet. Deployments are used to apply high-availability (HA) policies to your containers by defining how many replicas of each pod must be running at any one time.
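To make "desired state" concrete, here is a minimal sketch of a Deployment manifest. It reuses the hello-minikube name and echoserver image from later in this tutorial; the replica count of 2, the app label and the file name are assumptions made for the example.

```shell
# Write a hypothetical Deployment manifest to hello-deployment.yaml.
cat > hello-deployment.yaml <<'EOF'
apiVersion: apps/v1
kind: Deployment
metadata:
  name: hello-minikube
spec:
  replicas: 2                  # desired number of identical pods
  selector:
    matchLabels:
      app: hello-minikube      # must match the pod template labels below
  template:
    metadata:
      labels:
        app: hello-minikube
    spec:
      containers:
        - name: echoserver
          image: k8s.gcr.io/echoserver:1.4
          ports:
            - containerPort: 8080
EOF

# With a running cluster, the manifest could be applied with:
#   kubectl apply -f hello-deployment.yaml
```

Changing replicas and re-applying the file is one way to ask Kubernetes for more or fewer copies of the same pod.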

Service: A coupling of a set of pods to a policy by which to access them. Services give a changing set of pods a stable address and can be used to expose containerised applications to clients outside the cluster.
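The coupling between a service and its pods is expressed through a label selector. The sketch below writes a NodePort service that would select pods labelled app: hello-minikube; that label, the port numbers and the file name are assumptions for illustration.

```shell
# Write a hypothetical Service manifest to hello-service.yaml.
cat > hello-service.yaml <<'EOF'
apiVersion: v1
kind: Service
metadata:
  name: hello-minikube
spec:
  type: NodePort               # also opens a port on each node
  selector:
    app: hello-minikube        # traffic goes to pods carrying this label
  ports:
    - port: 8080               # port exposed by the service
      targetPort: 8080         # port the container listens on
EOF

# With a running cluster, the manifest could be applied with:
#   kubectl apply -f hello-service.yaml
```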

Node: A host, normally a virtual machine, on which pods and their containers run.

A K8s cluster is made of a master node, which exposes the API, schedules deployments, and generally manages the cluster, and multiple worker nodes. Each worker node runs a container runtime, such as Docker or rkt, along with an agent (the kubelet) that communicates with the master.

These master components comprise a master node:

Out of the box, K8s provides several key features that allow us to run immutable infrastructure. Containers can be killed and replaced automatically (self-healing), and each new container gets access to the supporting volumes, secrets, configurations, etc., that make it function.

These key K8S features make your containerized application scale efficiently:

Kubernetes can do a lot of cool, useful things. But it’s just as important to consider what Kubernetes isn’t capable of:

K8s is deliberately unopinionated about these things so that we can build our app the way we want, expose any type of information, and collect that information however we want.

Of course, Kubernetes isn’t the only tool on the market. There are a variety, including:

Overall, Kubernetes offers the best out-of-the-box features along with countless third-party add-ons to easily extend its functionality.

Typically, you would install Kubernetes on either on-premises hardware or one of the major cloud providers. Many cloud providers and third parties now offer managed Kubernetes services; however, for a testing or learning experience these are both costly and unnecessary. The easiest and quickest way to get started with Kubernetes in an isolated development/test environment is minikube.

Installing K8s locally is simple and straightforward. You need two things to get up and running: kubectl and minikube.
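Before going further, it can help to confirm that both tools are on your PATH. The helper below is a small sketch; the function name check_tool is our own and not part of either tool.

```shell
# Report whether a command is available on the PATH.
check_tool() {
  if command -v "$1" >/dev/null 2>&1; then
    echo "$1: found"
  else
    echo "$1: missing"
  fi
}

check_tool kubectl
check_tool minikube
```

If either line reports missing, install that tool before continuing.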

With these, you can start deploying your containerized apps to a cluster locally within just a few minutes. For a production-grade cluster that is highly available, you can use tools such as:

Minikube allows you to run a single-node cluster inside a virtual machine (typically running inside VirtualBox). Follow the official Kubernetes documentation to install minikube on your machine: https://kubernetes.io/docs/setup/minikube/

With minikube installed, you are now ready to run a virtualised single-node cluster on your local machine. Start your minikube cluster with:

$ minikube start

Interacting with Kubernetes clusters is mostly done via the kubectl CLI or the Kubernetes Dashboard. The kubectl CLI also supports bash autocompletion, which saves a lot of typing (and memory). Install the kubectl CLI on your machine by following the official installation instructions: https://kubernetes.io/docs/tasks/tools/install-kubectl/

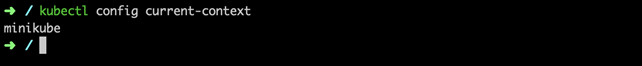

To interact with your Kubernetes clusters you will need to set your kubectl CLI context. A context is a named group of access parameters (a cluster, a user and a default namespace) that tells kubectl which cluster to talk to and with which credentials. When minikube starts, the current context is automatically switched to minikube. The following kubectl commands list the available contexts, switch the current context, and delete a context:

$ kubectl config get-contexts

$ kubectl config use-context <context-name>

$ kubectl config delete-context <context-name>
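Contexts live in a kubeconfig file. The sketch below writes a stripped-down example to show how one context ties a cluster, a user and a namespace together. The server address and all names are placeholders, and a real kubeconfig generated by minikube also carries certificate data that is omitted here.

```shell
# Write a hypothetical, stripped-down kubeconfig to demo-kubeconfig.yaml.
cat > demo-kubeconfig.yaml <<'EOF'
apiVersion: v1
kind: Config
current-context: minikube      # the context kubectl uses by default
clusters:
  - name: minikube
    cluster:
      server: https://192.168.99.100:8443   # placeholder address
contexts:
  - name: minikube
    context:
      cluster: minikube        # which cluster to talk to
      user: minikube           # which credentials to use
      namespace: default       # namespace assumed when none is given
users:
  - name: minikube
    user: {}
EOF

# kubectl can be pointed at a specific file through the KUBECONFIG variable:
#   KUBECONFIG=./demo-kubeconfig.yaml kubectl config get-contexts
```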

By now you should have a single-node Kubernetes cluster running on your local machine. The rest of this tutorial outlines the steps required to deploy a simple Hello World containerised application, inside a pod, with an exposed endpoint on the minikube node IP address. Create the Kubernetes deployment with:

$ kubectl create deployment hello-minikube --image=k8s.gcr.io/echoserver:1.4 --port=8080

To confirm that the deployment was created, view it with:

$ kubectl get deployments

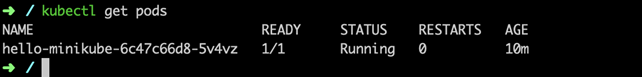

Our deployment should have created a Kubernetes pod. We can view the pods running in our cluster with:

$ kubectl get pods

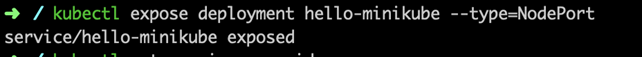

Before we can hit our Hello World application with an HTTP request from outside our cluster (i.e. from our development machine), we need to expose the pod as a Kubernetes service. By default, pods are only accessible on their internal IP address, which cannot be reached from outside the cluster.

$ kubectl expose deployment hello-minikube --type=NodePort

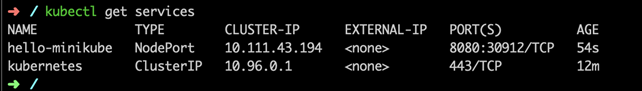

Exposing a deployment creates a Kubernetes service. We can view the service with:

$ kubectl get services

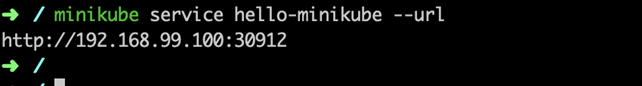

When using a cloud provider you would normally set --type=LoadBalancer to give the service a private or public IP address outside of the ClusterIP range. As a local development and testing environment, minikube does not provision an external load balancer by default, so --type=NodePort exposes the service on the minikube host IP instead. To find the URL used to access your containerised application, type:

$ minikube service hello-minikube --url

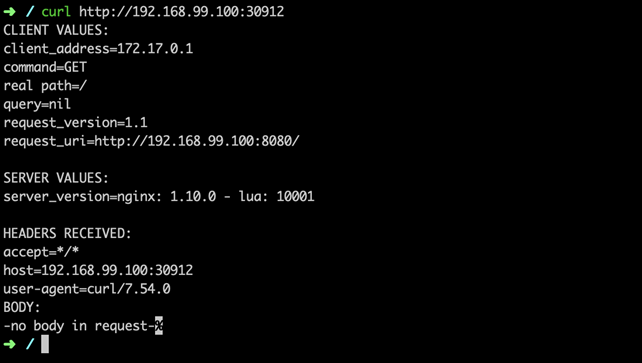

Use curl from your terminal to test that our exposed service is reaching our pod, substituting the IP address and port from the URL reported by minikube.

$ curl http://<minikube-ip>:<port>
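A freshly created pod can take a few seconds to start serving, so the first request may fail. The helper below is a small sketch that retries curl until the URL answers or the attempts run out; the function name wait_for_http and its default of 10 attempts are our own assumptions.

```shell
# Retry an HTTP GET once per second until it succeeds or attempts run out.
wait_for_http() {
  url=$1
  attempts=${2:-10}
  i=0
  while [ "$i" -lt "$attempts" ]; do
    # -f fails on HTTP errors, -sS stays quiet except for errors.
    if curl -fsS --max-time 2 "$url" >/dev/null 2>&1; then
      echo "reachable: $url"
      return 0
    fi
    i=$((i + 1))
    sleep 1
  done
  echo "gave up after $attempts attempts: $url"
  return 1
}

# Example (replace the placeholders with the URL from minikube service):
#   wait_for_http "http://<minikube-ip>:<port>" 15
```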

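Now that we have made an HTTP request to our pod via the Kubernetes service, we can confirm that everything is working as expected. Checking the pod logs, we should see our HTTP request. The pod name below is from our cluster; substitute the name that kubectl get pods reports for yours.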

$ kubectl logs hello-minikube-c8b6b4fdc-sz67z

To conclude, we are now running a simple containerised application inside a single-node Kubernetes cluster, with an exposed endpoint via a Kubernetes service.

Minikube is great for getting to grips with Kubernetes and learning the concepts of container orchestration at scale, but you wouldn’t want to run your production workloads from your local machine. Following the above you should now have a functioning Kubernetes pod, service and deployment running a simple Hello World application.

From here, if you are looking to start using Kubernetes for your containerized applications, a good next step is to look into building a Kubernetes cluster or to compare the managed Kubernetes offerings from the popular cloud providers.
